Kritarth Prasad*, Mohammadi Zaki*, Pratik Rakesh Singh, Pankaj. W

30th September 2024

Overview of proposed framework

Mohammadi Zaki summarises the paper titled "Faster Machine Translation Ensembling with Reinforcement Learning and Competitive Correction", co-authored by Kritarth Prasad, Mohammadi Zaki, Pratik Rakesh Singh, and Pankaj. W, accepted at the Findings of the Association for Computational Linguistics (NAACL), April 2025.

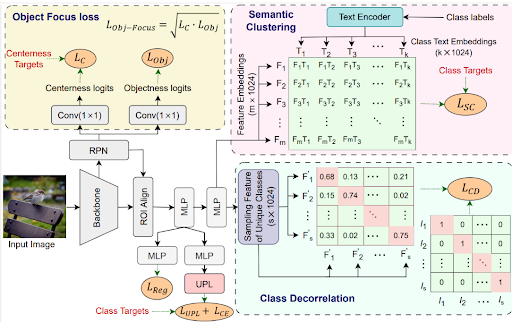

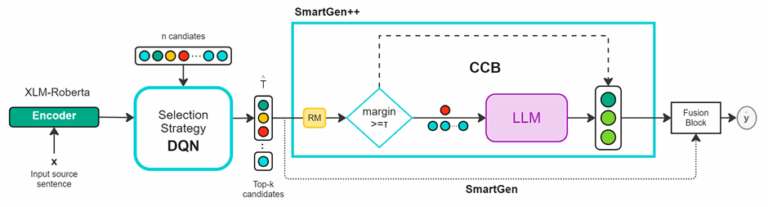

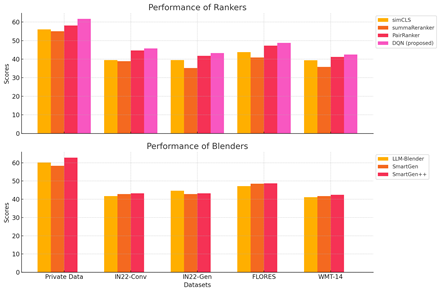

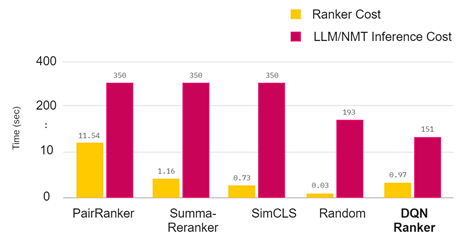

Recent progress in machine translation has produced a range of open-source neural models and general-purpose LLMs capable of translation, but their effectiveness often depends on model size and the quality of the pretraining data. Ensembling techniques have therefore become crucial for overcoming the limitations of smaller models. Traditional ensemble methods use static weight sharing, whereas modern approaches adjust the weights dynamically based on the input sentence and the quality of the candidate translations. These approaches typically consist of a candidate selection block (CSB) followed by a fusion block (FB) that combines the selected outputs. However, they require all L models in the ensemble to translate each input, which increases inference cost significantly and is particularly problematic with LLMs. Furthermore, current CSB strategies are often ineffective at selecting the best candidates. This work proposes a new ensembling strategy that aims to improve translation quality while reducing computational overhead compared with existing methods.

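Building on the need for efficient and high-quality ensembling in machine translation, the paper introduces a reinforcement-learning-based candidate selection scheme together with a competitive correction step.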

This work addresses key shortcomings in current ensembling methods for machine translation by reframing candidate selection as a reinforcement learning task. By leveraging a Deep Q-Network (DQN), the approach dynamically selects optimal candidates for fusion, significantly reducing inference cost while improving translation quality through exploration. To mitigate the negative impact of weak candidates, the authors introduce a correction strategy that selectively refines translations at the cost of modest additional inference time. Extensive experiments across multiple benchmark datasets demonstrate that the proposed method consistently outperforms existing approaches, achieving state-of-the-art results across various evaluation metrics.
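The idea of treating candidate selection as a reinforcement learning problem can be illustrated with a small sketch. This is not the authors' implementation: it replaces the Deep Q-Network with a linear Q-function, treats each sentence as a one-step episode, and uses made-up reward values (`true_quality`) in place of a real translation quality metric. All function names, the two-dimensional state, and the hyperparameters are assumptions chosen for illustration. What it shows is the general pattern: estimate a value for each model given the input, run only the top `k` models instead of all L, and explore occasionally so that better models can be discovered.

```python
import random


def q_values(weights, state):
    # One linear Q-estimate per translation model: Q(s, a) = w_a . s
    # (a stand-in for the neural Q-network; an assumption of this sketch).
    return [sum(w * x for w, x in zip(w_a, state)) for w_a in weights]


def select_models(weights, state, k, epsilon, rng):
    # Epsilon-greedy choice of which k models to run for this input.
    n = len(weights)
    if rng.random() < epsilon:
        return sorted(rng.sample(range(n), k))  # explore: random subset
    q = q_values(weights, state)
    ranked = sorted(range(n), key=lambda a: q[a], reverse=True)
    return sorted(ranked[:k])  # exploit: highest estimated value


def update(weights, state, action, reward, lr=0.1):
    # Move Q(s, a) toward the observed reward (one-step episode, no bootstrapping).
    error = reward - q_values(weights, state)[action]
    weights[action] = [w + lr * error * x for w, x in zip(weights[action], state)]


def train_toy(num_models=4, steps=2000, seed=0):
    rng = random.Random(seed)
    weights = [[0.0, 0.0] for _ in range(num_models)]
    # Hypothetical average quality per model; a real system would score
    # each candidate translation with an evaluation metric instead.
    true_quality = [0.2, 0.6, 0.9, 0.3]
    for _ in range(steps):
        state = [1.0, rng.random()]  # bias term plus one toy sentence feature
        for a in select_models(weights, state, k=2, epsilon=0.2, rng=rng):
            reward = true_quality[a] + rng.uniform(-0.05, 0.05)
            update(weights, state, a, reward)
    return weights


if __name__ == "__main__":
    trained = train_toy()
    chosen = select_models(trained, [1.0, 0.5], k=2, epsilon=0.0, rng=random.Random(1))
    print("models selected for fusion:", chosen)
```

In this toy setting only two of the four models are run per sentence, which is where the inference saving would come from; the selected candidates would then be passed to the fusion block.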

